It’s hard to remember a world without blogs. Originally a sort of online journal full of mundane personal updates, web logs have morphed into an extremely powerful form of communication. They were once shunned by the mainstream; now they are the mainstream.


The story I’m living,

these days, is about competence and I think most people speaking at testing

conferences are not competent enough. A lot of what’s talked about at testing

conferences is the muttering of idiots. By idiot, I mean functionally stupid people: people who choose not to use their minds to find excellent solutions to

important problems, but instead speak ritualistically and uncritically about monsters

and angels and mathematically invalid metrics and fraudulent standards and other useless or sinister tools that are designed

to amaze and confuse the ignorant.

I want to see at least 50% of people speaking about

testing to be competent. That’s my goal. I think it is achievable, but it will

take a lot of work. We are up against an entrenched and powerful interest: the

promoters-of-ineptness (few of whom realize the damage they do) who run the

world and impose themselves on our craft.

Why are there so many idiots and why do they run the

world? The roots and dynamics of idiocracy are deep. It’s a good question but I

don’t want to go into it here and now.

There are important challenges to being a remote

tester, though. The main technical problem, in my experience, is acquiring and

configuring the product to test. Getting up to speed fast benefits from being

onsite. The main social problem is trust. Hiring a remote tester, especially

one who’s supposed to be high powered, is a little like hiring a therapist. In

my experience, developers feel more sensitive about a well-paid, high status

outsider poking at their work, than they do about an internal tester who

probably will disappear in a few weeks.

Then there’s communication. Despite all the tools for

modern communication, we haven’t yet developed a culture of remote interaction

that lets us use those tools effectively. Even though I’m always on Skype, and

people can see I’m on Skype, they still ask permission to call me on Skype! And

I also feel nervous about calling other people on Skype. They might be annoyed

with me. I do use GoToMeeting, and that helps a lot. Kevin Daron and I have

been collaboratively writing, recently, using it. We like it.

Finally, there is one big logistical problem:

availability. You can call me up and have me test for you. But, being a fully

independent consultant, my time is chopped up. I have a week here and a few

days there, usually. This is the main reason I go in for short-term consulting

and coaching. Unless a rich client comes along and induces me to clear my

schedule, I can’t afford to have only that one client.

Now I will try to give you an S3 idea on this topic. Not the Galaxy S3: this is the kind of idea a mentor gives his students to help them understand a problem.

SIMPLE

STRAIGHT

SOLID

This S3 idea will help you understand the logic of testing.

Hello everyone. It has been a long time since I was last here and I have missed a lot of things; I hope that has not done serious harm. Still, I can see that the response has stayed strong everywhere. I am pleased to see such an excellent response to my blog and website. I keep getting feedback and suggestions on the blog, by mail and on Facebook, and the number of visitors has kept growing these days. Thank you all for appreciating it.

I will tell you truthfully why my pages are getting remarkable view counts on Google and many other search engines: it is possible only because of you. Thank you so much for your appreciation. I will keep writing for you all till my last breath.

God bless…

1. Introduction (SE).

2. SDLC (Software development life cycle).

3. Software Models.

4. Software Testing.

5. Levels of testing.

6. Types of testing.

7. Testing Prototypes.

8. Testing V&V.

9. Advantages of software testing.

10. Contact.

The notion of

‘software engineering’ was first proposed in 1968 at a conference held to

discuss what was then called the ‘software crisis’ (Naur and Randell, 1969). It

became clear that individual approaches to program development did not scale up

to large and complex software systems. These were unreliable, cost more than expected,

and were delivered late.

Throughout the

1970's and 1980's, a variety of new software engineering techniques and methods

were developed, such as structured programming, information hiding and object-oriented

development. Tools and standard notations were developed and are now

extensively used.

Lots of people

write programs. People in business write spreadsheet programs to simplify their

jobs, scientists and engineers write programs to process their experimental data,

and hobbyists write programs for their own interest and enjoyment.

However, the vast

majority of software development is a professional activity where software is

developed for specific business purposes, for inclusion in other devices, or as

software products such as information systems, CAD systems, etc. Professional software,

intended for use by someone apart from its developer, is usually developed

by teams rather

than individuals. It is maintained and changed throughout its life.

Software

engineering is intended to support professional software development, rather

than individual programming. It includes techniques that support program specification,

design, and evolution, none of which are normally relevant for personal software

development. To help you to get a broad view of what software engineering is

about, I have summarized some frequently asked questions.

Many people think

that software is simply another word for computer programs.

However, when we

are talking about software engineering, software is not just the programs

themselves but also all associated documentation and configuration data that is

required to make these programs operate correctly. A professionally developed software

system is often more than a single program. The system usually consists of a

number of separate programs and configuration files that are used to set up

these programs. It

may include system documentation, which describes the structure of the system;

user documentation, which explains how to use the system, and websites for

users to download recent product information.

This is one of the

important differences between professional and amateur software development. If

you are writing a program for yourself, no one else will use it and you don’t

have to worry about writing program guides, documenting the program design,

etc. However, if you are writing software that other people will use and other

engineers will change then you usually have to provide additional information

as well as the code

of the program.

According to Wikipedia:

Software engineering is the study and application of engineering to the design, development, and maintenance of software.

Typical formal definitions of software engineering are:

· "research, design, develop, and test operating systems-level software, compilers, and network distribution software for medical, industrial, military, communications, aerospace, business, scientific, and general computing applications";

· "the systematic application of scientific and technological knowledge, methods, and experience to the design, implementation, testing, and documentation of software";

· "the application of a systematic, disciplined, quantifiable approach to the development, operation, and maintenance of software";

· "an engineering discipline that is concerned with all aspects of software production";

· and "the establishment and use of sound engineering principles in order to economically obtain software that is reliable and works efficiently on real machines."

The three Ps: People, Product and Process.

People are a very

important aspect of software engineering and software systems. People use the

system being developed, people design the system, people build the system,

people maintain the system, and people pay for the system. Software engineering

is as much about the organization and management of people as it is about

technology. There are typically two types of software product:

Generic products: These are

stand-alone systems that are produced by a development organization and sold in

the open market to any customer who wants to buy it.

Bespoke (customized) products: These

are systems that are commissioned by a specific customer and developed

specially by some contractor to meet a special need.

Most software expenditure is on generic products, but most development effort is on bespoke systems.

The trend is towards the development of bespoke systems by integrating generic components (that must themselves be interoperable). This requires two of the non-functional properties (the ‘ilities’) mentioned earlier: composability and interoperability.

The software process is a structured set of activities required to

develop a software system:

• Specification

• Design

• Validation

• Evolution

These activities vary depending

on the organization and the type of system being developed.

There are several different

process models and the correct model must be chosen to match the organization

and the project.

Let’s now look more deeply at the different types of

software process.

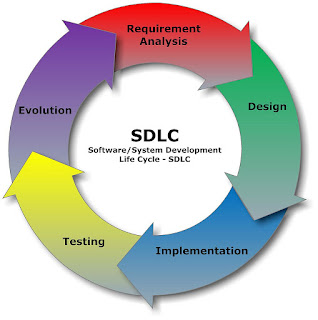

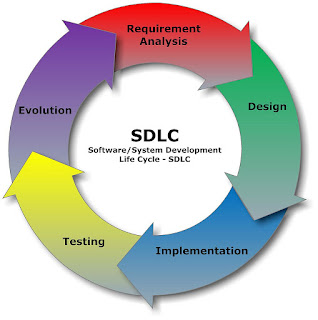

2. SDLC (Software development life cycle):

Any product development can be

expected to proceed as an organized process that usually includes the following

phases:

· Planning / Specification

· Design

· Implementation

· Evaluation

Figure: Software development cycle

Stage 1: Planning and Requirement Analysis

Requirement analysis is the most important and fundamental stage

in SDLC. It is performed by the senior members of the team with inputs from the

customer, the sales department, market surveys and domain experts in the

industry. This information is then used to plan the basic project approach and

to conduct product feasibility study in the economical, operational, and

technical areas.

Planning for the quality assurance requirements and identification

of the risks associated with the project is also done in the planning stage.

The outcome of the technical feasibility study is to define the various

technical approaches that can be followed to implement the project successfully

with minimum risks.

Stage 2: Defining Requirements

Once the requirement analysis is done, the next step is to clearly define and document the product requirements and get them approved by the customer or the market analysts. This is done through an SRS (Software Requirement Specification) document, which consists of all the product requirements to be designed and developed during the project life cycle.

Stage 3: Designing the product architecture

The SRS is the reference for product architects to come up with the best architecture for the product to be developed. Based on the requirements specified in the SRS, usually more than one design approach for the product architecture is proposed and documented in a DDS (Design Document Specification).

This DDS is reviewed by all the important stakeholders and, based on various parameters such as risk assessment, product robustness, design modularity, budget and time constraints, the best design approach is selected for the product.

A design approach clearly defines all the architectural modules of

the product along with its communication and data flow representation with the

external and third party modules (if any). The internal design of all the

modules of the proposed architecture should be clearly defined with the

minutest of the details in DDS.

Stage 4: Building or Developing the Product

In this stage of SDLC the actual development starts and the

product is built. The programming code is generated as per DDS during this

stage. If the design is performed in a detailed and organized manner, code

generation can be accomplished without much hassle.

Developers have to follow the coding guidelines defined by their organization, and programming tools such as compilers, interpreters and debuggers are used to generate the code. Different high-level programming languages such as C, C++, Pascal, Java, and PHP are used for coding. The programming language is chosen with respect to the type of software being developed.

Stage 5: Testing the Product

This stage is usually a subset of all the stages, because in modern SDLC models testing activities are involved in nearly every stage of the SDLC. However, this stage refers to the testing-only stage of the product, where product defects are reported, tracked, fixed and retested until the product reaches the quality standards defined in the SRS.

Stage 6: Deployment in the Market and Maintenance

Once the product is tested and ready to be deployed, it is released formally in the appropriate market. Sometimes product deployment happens in stages, as per the organization's business strategy. The product may first be released in a limited segment and tested in the real business environment (UAT, User Acceptance Testing).

Then, based on the feedback, the product may be released as it is or with suggested enhancements in the target market segment. After the product is released in the market, it is maintained for the existing customer base.

3. Software Models:

Various software development life cycle models have been defined and designed, and they are followed during the software development process. These models are also referred to as "Software Development Process Models". Each process model follows a series of steps unique to its type, in order to ensure success in the process of software development.

Following are the most important and popular SDLC models followed

in the industry:

· Waterfall Model

· Iterative Model

· Spiral Model

· V-Model

· Big Bang Model

Other related methodologies are the Agile Model, the RAD (Rapid Application Development) Model and Prototyping Models. The most widely used models are Waterfall and Spiral.

Providing a link which explains these models in detail:

4. Software Testing:

I hope you now have an idea of what software engineering is, so let us discuss testing in software engineering.

Software testing is an investigation process that checks the software against the requirements gathered from users and against the system specifications. Testing is conducted at the phase level in the software development life cycle or at the module level in program code. Software testing comprises validation and verification.

Now some basic differences between verification and validation (V&V):

| S.N. | Verification | Validation |
|---|---|---|
| 1 | Verification addresses the concern: "Are you building it right?" | Validation addresses the concern: "Are you building the right thing?" |
| 2 | Ensures that the software system meets all the functionality. | Ensures that the functionalities meet the intended behavior. |
| 3 | Verification takes place first and includes the checking for documentation, code, etc. | Validation occurs after verification and mainly involves the checking of the overall product. |
| 4 | Done by developers. | Done by testers. |
| 5 | It has static activities, as it includes collecting reviews, walkthroughs, and inspections to verify software. | It has dynamic activities, as it includes executing the software against the requirements. |
| 6 | It is an objective process and no subjective decision should be needed to verify a software. | It is a subjective process and involves subjective decisions on how well a software works. |

According to Wikipedia:

Software testing is

an investigation conducted to provide stakeholders with information about the

quality of the product or service under test. Software testing can also

provide an objective, independent view of the software to

allow the business to appreciate and understand the risks of software implementation.

Test techniques include the process of executing a program or application with

the intent of finding software bugs (errors or other defects).

It involves the execution of a software component or system component to evaluate one or more properties of interest. In general, these properties indicate the extent to which the component or system under test:

· meets the requirements that guided its design and development,

· responds correctly to all kinds of inputs,

· performs its functions within an acceptable time,

· is sufficiently usable,

· can be installed and run in its intended environments, and

· achieves the general result its stakeholders desire.

5. Levels of testing:

There are four levels of testing:

· Unit testing

· Integration testing

· System testing

· Acceptance testing

Unit Testing is a level of the software testing process where

individual units/components of a software/system are tested. The purpose is to

validate that each unit of the software performs as designed.

Integration Testing is a level of the software testing process where individual units are combined and tested as a group. The purpose of this level of testing is to expose faults in the interaction between integrated units.

System Testing is a level of the software testing process where a complete, integrated system/software is tested. The purpose of this test is to evaluate the system’s compliance with the specified requirements.

Acceptance Testing is a level of the software testing process where a system is tested for acceptability. The purpose of this test is to evaluate the system’s compliance with the business requirements and assess whether it is acceptable for delivery.
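To make the difference between the first two levels concrete, here is a minimal Python sketch. The functions `parse_price`, `apply_discount` and `discounted_price` are hypothetical examples invented for illustration; they do not come from any real project.

```python
def parse_price(text: str) -> float:
    """Unit 1 (hypothetical): convert a string such as ' 19.99 ' to a number."""
    return float(text.strip())


def apply_discount(price: float, percent: float) -> float:
    """Unit 2 (hypothetical): reduce a price by a percentage."""
    return round(price * (1 - percent / 100), 2)


def discounted_price(text: str, percent: float) -> float:
    """The two units combined into one feature."""
    return apply_discount(parse_price(text), percent)


# Unit tests: each unit is checked on its own.
assert parse_price(" 19.99 ") == 19.99
assert apply_discount(200.0, 25) == 150.0

# Integration test: the units are combined and checked as a group,
# which would expose a mismatch in what one unit hands to the other.
assert discounted_price("200", 25) == 150.0
```

System and acceptance testing would then exercise the complete application, which is beyond what a short snippet can show.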

6. Types of testing:

There are 3 types of software testing:

- White box testing.

- Black box testing.

- Grey box testing.

| Criteria | Black Box Testing | White Box Testing |
|---|---|---|
| Definition | Black Box Testing is a software testing method in which the internal structure/design/implementation of the item being tested is NOT known to the tester. | White Box Testing is a software testing method in which the internal structure/design/implementation of the item being tested is known to the tester. |
| Levels Applicable To | Mainly applicable to higher levels of testing: Acceptance Testing, System Testing | Mainly applicable to lower levels of testing: Unit Testing, Integration Testing |
| Responsibility | Generally, independent Software Testers | Generally, Software Developers |
| Programming Knowledge | Not Required | Required |
| Implementation Knowledge | Not Required | Required |
| Basis for Test Cases | Requirement Specifications | Detail Design |

According to Wikipedia:

White-box

testing (also known as clear box testing, glass box testing, transparent box testing and structural testing) tests internal

structures or workings of a program, as opposed to the functionality exposed to

the end-user. In white-box testing an internal perspective of the system, as

well as programming skills, are used to design test cases. The tester chooses

inputs to exercise paths through the code and determine the appropriate

outputs. This is analogous to testing nodes in a circuit, e.g. in-circuit

testing (ICT).

While

white-box testing can be applied at the unit, integration and system levels

of the software testing process, it is usually done at the unit level. It can

test paths within a unit, paths between units during integration, and between

subsystems during a system–level test. Though this method of test design can

uncover many errors or problems, it might not detect unimplemented parts of the

specification or missing requirements.

Techniques used in white-box testing include:

· API testing – testing of the application using public and private APIs (application programming interfaces)

· Code coverage – creating tests to satisfy some criteria of code coverage (e.g., the test designer can create tests to cause all statements in the program to be executed at least once)

· Fault injection methods – intentionally introducing faults to gauge the efficacy of testing strategies

· Mutation testing methods

· Static testing methods
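Mutation testing is listed above without an explanation, so here is a minimal hand-rolled sketch of the idea in Python. Real mutation tools generate mutants automatically; in this sketch the mutant is written by hand, and the function names and the age limit of 18 are assumptions made up for illustration.

```python
def is_adult(age: int) -> bool:
    """Original code under test (hypothetical)."""
    return age >= 18


def is_adult_mutant(age: int) -> bool:
    """Mutant: the operator '>=' has been changed to '>'."""
    return age > 18


def weak_suite(fn) -> bool:
    """A test suite that never checks the boundary value 18."""
    return fn(30) is True and fn(5) is False


def strong_suite(fn) -> bool:
    """The same suite plus a boundary check."""
    return weak_suite(fn) and fn(18) is True


# The weak suite passes for the original AND for the mutant,
# so the mutant "survives": the suite cannot tell them apart.
mutant_survives_weak = weak_suite(is_adult) and weak_suite(is_adult_mutant)

# The strong suite passes for the original but fails for the mutant,
# so the mutant is "killed".
mutant_killed_by_strong = strong_suite(is_adult) and not strong_suite(is_adult_mutant)
```

A surviving mutant is a hint that the test suite is missing a case, here the boundary value.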

Code

coverage tools can evaluate the completeness of a test suite that was created

with any method, including black-box testing. This allows the software team to

examine parts of a system that are rarely tested and ensures that the most

important function points have been tested. Code

coverage as a software metric can be reported as a

percentage for:

· Function coverage, which reports on functions executed

· Statement coverage, which reports on the number of lines executed to complete the test

· Decision coverage, which reports on whether both the True and the False branch of a given test have been executed

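100% statement coverage ensures that every statement is executed at least once, but on its own it does not guarantee that every branch or path (in terms of control flow) has been taken; checking both outcomes of each decision is what decision coverage adds. Coverage is helpful in ensuring correct functionality, but not sufficient, since the same code may process different inputs correctly or incorrectly.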
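A small Python sketch can show how statement coverage and decision coverage differ. The function `describe` is a hypothetical example with a deliberate bug; it is not taken from any real code base.

```python
def describe(count: int, verbose: bool) -> str:
    """Hypothetical function with a bug on the path where verbose is False."""
    suffix = None
    if verbose:
        suffix = " items"
    # Bug: when verbose is False, suffix is still None here.
    return str(count) + suffix


# This single test executes every statement in describe(),
# so statement coverage is 100% and the test passes.
assert describe(3, True) == "3 items"

# The False outcome of the 'if' was never exercised.
# A decision-coverage test for that outcome exposes the bug.
try:
    describe(3, False)
    false_branch_ok = True
except TypeError:
    false_branch_ok = False
```

After this runs, `false_branch_ok` is False: the code was fully "covered" by statements and still wrong on one branch.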

******************************************************

Black-box

testing treats the software as a

"black box", examining functionality without any knowledge of

internal implementation. The testers are only aware of what the software is

supposed to do, not how it does it. Black-box testing methods include: equivalence partitioning, boundary value analysis, all-pairs

testing, state transition tables, decision

table testing, fuzz testing, model-based testing, use case testing, exploratory testing and specification-based

testing.
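Two of the black-box methods named above, equivalence partitioning and boundary value analysis, can be sketched in a few lines of Python. The `grade` function and its rule (0 to 49 fails, 50 to 100 passes) are assumptions invented for this example; the tests only use the stated rule, not the implementation.

```python
def grade(score: int) -> str:
    """Hypothetical spec: 0-49 is 'fail', 50-100 is 'pass', anything else is invalid."""
    if not 0 <= score <= 100:
        raise ValueError("score out of range")
    return "pass" if score >= 50 else "fail"


# Equivalence partitioning: one representative value per valid class.
partitions = {25: "fail", 75: "pass"}

# Boundary value analysis: values sitting on the edges of each class.
boundaries = {0: "fail", 49: "fail", 50: "pass", 100: "pass"}

for score, expected in {**partitions, **boundaries}.items():
    assert grade(score) == expected

# Values just outside the valid range must be rejected.
for invalid in (-1, 101):
    try:
        grade(invalid)
        raise AssertionError("expected ValueError")
    except ValueError:
        pass
```

The tester needs only the specification to choose these inputs, which is what makes the approach black-box.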

*******************************************************

Gray Box Testing is a software testing method which is a combination of the Black Box Testing method and the White Box Testing method. In Black Box Testing, the internal structure of the item being tested is unknown to the tester, and in White Box Testing the internal structure is known. In Gray Box Testing, the internal structure is partially known. This involves having access to internal data structures and algorithms for purposes of designing the test cases, but testing at the user, or black-box, level.

Gray Box

Testing is named so because the software program, in the eyes of the tester is

like a gray/ semi-transparent box; inside which one can partially see.

EXAMPLE:

An example

of Gray Box Testing would be when the codes for two units/ modules are studied

(White Box Testing method) for designing test cases and actual tests are

conducted using the exposed interfaces (Black Box Testing method).

LEVELS APPLICABLE TO:

Though the Gray Box Testing method may be used in other levels of testing, it is primarily useful in Integration Testing.

SPELLING:

Note that Gray is also

spelt as Grey. Hence

Grey Box Testing and Gray Box Testing mean the same.

*******************************************************

Other

testing types:

Compatibility testing:

A common cause of software failure (real or perceived) is a lack of compatibility with other application software, operating systems (or operating system versions, old or new), or target environments that differ greatly from the original (such as a terminal or GUI application intended to be run on the desktop now being required to become a web application, which must render in a web browser). For example, in the case of a lack of backward compatibility, this can occur because the programmers develop and test software only on the latest version of the target environment, which not all users may be running. This results in the unintended consequence that the latest work may not function on earlier versions of the target environment, or on older hardware that earlier versions of the target environment were capable of using. Sometimes such issues can be fixed by proactively abstracting operating system functionality into a separate program module or library.

Smoke and sanity testing:

Sanity testing determines whether it is reasonable to

proceed with further testing.

Smoke testing consists of minimal attempts to operate the software, designed to determine whether there are any basic problems that will prevent it from working at all. Such tests can be used as a build verification test.

Regression testing:

Regression testing focuses on finding defects after a major code change has occurred. Specifically, it seeks to uncover software regressions, such as degraded or lost features, including old bugs that have come back. Such regressions occur whenever software functionality that was previously working correctly stops working as intended. Typically, regressions occur as an unintended consequence of program changes, when the newly developed part of the software collides with the previously existing code. Common methods of regression testing include re-running previous sets of test cases and checking whether previously fixed faults have re-emerged. The depth of testing depends on the phase in the release process and the risk of the added features. The tests can either be complete, for changes added late in the release or deemed to be risky, or be very shallow, consisting of positive tests on each feature, if the changes are early in the release or deemed to be of low risk. Regression testing is typically the largest test effort in commercial software development, due to checking numerous details in prior software features, and even new software can be developed while using some old test cases to test parts of the new design to ensure prior functionality is still supported.
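The idea of re-running previous test cases can be sketched in Python as follows. Everything here is hypothetical: `slugify` stands in for code that worked before a change, `slugify_v2` for a later rewrite, and the case about leading spaces for a bug assumed to have been fixed earlier.

```python
def slugify(title: str) -> str:
    """Code under test (hypothetical): turn a title into a URL slug."""
    return "-".join(title.lower().split())


def slugify_v2(title: str) -> str:
    """A later rewrite (hypothetical) that mishandles whitespace again."""
    return title.lower().replace(" ", "-")


# Recorded input/output pairs from earlier releases, including a case
# that guards a (hypothetical) previously fixed bug.
REGRESSION_CASES = [
    ("Hello World", "hello-world"),
    ("  Leading spaces", "leading-spaces"),
    ("Tabs\tand\nnewlines", "tabs-and-newlines"),
]


def run_regression(fn) -> list:
    """Return every case whose output no longer matches the recorded result."""
    return [(inp, exp, fn(inp)) for inp, exp in REGRESSION_CASES if fn(inp) != exp]


failures_v1 = run_regression(slugify)      # no regressions
failures_v2 = run_regression(slugify_v2)   # the rewrite breaks old behavior
```

Running the same recorded cases against every new version is what catches the old bug coming back in `slugify_v2`.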

Acceptance testing:

Acceptance testing can mean one of two things:

1. A smoke test is used as an acceptance test prior to introducing a new build to the main testing process, i.e. before integration or regression.

2. Acceptance testing performed by the customer, often in their lab environment on their own hardware, is known as user acceptance testing (UAT). Acceptance testing may be performed as part of the hand-off process between any two phases of development.

Alpha

testing:

Alpha testing is simulated or actual operational

testing by potential users/customers or an independent test team at the

developers' site. Alpha testing is often employed for off-the-shelf software as

a form of internal acceptance testing, before the software goes to beta testing.

Beta

testing:

Beta testing comes after alpha testing and can be

considered a form of external user acceptance testing. Versions of the

software, known as beta versions,

are released to a limited audience outside of the programming team known as

beta testers. The software is released to groups of people so that further

testing can ensure the product has few faults or bugs.

Beta versions can be made available to the open public to increase the feedback field to a maximal number of future

users and to deliver value earlier, for an extended or even infinite period of

time (perpetual beta).

Functional vs non-functional testing:

Functional testing refers to activities that verify a

specific action or function of the code. These are usually found in the code

requirements documentation, although some development methodologies work from

use cases or user stories. Functional tests tend to answer the question of

"can the user do this" or "does this particular feature

work."

Non-functional testing refers to aspects of the

software that may not be related to a specific function or user action, such as scalability or other performance,

behavior under certain constraints, or security.

Testing will determine the breaking point, the point at which extremes of

scalability or performance leads to unstable execution. Non-functional

requirements tend to be those that reflect the quality of the product,

particularly in the context of the suitability perspective of its users.

Destructive testing:

Destructive testing attempts to cause the software or a sub-system to fail. It verifies that the software functions properly even when it receives invalid or unexpected inputs, thereby establishing the robustness of input validation and error-management routines. Software fault injection, in the form of fuzzing, is an example of failure testing. Various commercial non-functional testing tools exist, and there are also numerous open-source and free software tools available that perform destructive testing.
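As a rough illustration of fuzzing, the Python sketch below throws random strings at an input-validation routine and records anything other than a controlled rejection. The function `parse_age`, the character set and the number of runs are all assumptions chosen for the example; real fuzzers are far more sophisticated.

```python
import random


def parse_age(text: str) -> int:
    """Hypothetical input-validation routine under test."""
    if not text.isdigit():
        raise ValueError("age must contain digits only")
    age = int(text)
    if age > 150:
        raise ValueError("age out of range")
    return age


def fuzz(fn, runs: int = 1000, seed: int = 0) -> list:
    """Call fn with random short strings and collect unexpected exceptions."""
    rng = random.Random(seed)  # fixed seed so a failure can be reproduced
    alphabet = "0123456789 -+.eE\x00abc\n"
    crashes = []
    for _ in range(runs):
        length = rng.randint(0, 8)
        text = "".join(rng.choice(alphabet) for _ in range(length))
        try:
            fn(text)
        except ValueError:
            pass  # a controlled rejection is the desired behavior
        except Exception as exc:  # anything else counts as a crash
            crashes.append((text, exc))
    return crashes


crashes = fuzz(parse_age)
```

An empty `crashes` list means the routine rejected every bad input in a controlled way; any entry would be an input worth investigating.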

Software performance testing:

Performance testing is generally executed to determine how a system or sub-system performs in terms of responsiveness and stability under a particular workload. It can also serve to investigate, measure, validate or verify other quality attributes of the system, such as scalability, reliability and resource usage.

Load testing is primarily concerned with testing that the system can continue to operate under a specific load, whether that be large quantities of data or a large number of users. This is generally referred to as software scalability. When performed as a non-functional activity, the related load testing activity is often referred to as endurance testing. Volume testing is a way to test software functions even when certain components (for example a file or database) increase radically in size. Stress testing is a way to test reliability under unexpected or rare workloads. Stability testing (often referred to as load or endurance testing) checks to see if the software can continuously function well in or above an acceptable period.

There is little agreement on what the specific goals

of performance testing are. The terms load testing, performance testing, scalability testing, and volume testing, are

often used interchangeably.

Real-time software systems have strict timing

constraints. To test if timing constraints are met, real-time

testing is used.

Usability

testing:

Usability

testing is to check if

the user interface is easy to use and understand. It is concerned mainly with

the use of the application.

Accessibility testing:

Accessibility testing may include compliance with standards such as:

· Americans with Disabilities Act of 1990

· Section 508 Amendment to the Rehabilitation Act of 1973

· Web Accessibility Initiative (WAI) of the World Wide Web Consortium (W3C)

Security testing:

Security testing is essential for software that processes confidential data to prevent system intrusion by hackers.

The International Organization for Standardization (ISO) defines this as a "type of testing conducted to evaluate the degree to which a test item, and associated data and information, are protected so that unauthorized persons or systems cannot use, read or modify them, and authorized persons or systems are not denied access to them."

Internationalization and localization:

The general ability of software to be internationalized and localized can be automatically tested without

actual translation, by using pseudo localization. It will verify that the

application still works, even after it has been translated into a new language

or adapted for a new culture (such as different currencies or time zones).
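Pseudo-localization can be sketched in a few lines of Python. This is only one simple way to do it, under my own assumptions: vowels are replaced with accented versions, the string is padded by roughly 30%, and it is wrapped in brackets. The function name `pseudo_localize` and the padding ratio are made up for the example, and the sketch does not protect format placeholders such as `{name}`.

```python
# Map plain vowels to accented ones so that untranslated (hard-coded)
# strings stand out and encoding problems show up early.
ACCENTED = str.maketrans({
    "a": "\u00e1",
    "e": "\u00e9",
    "i": "\u00ed",
    "o": "\u00f3",
    "u": "\u00fa",
})


def pseudo_localize(message: str, expansion: float = 0.3) -> str:
    """Return a fake 'translation' that is still readable by the tester."""
    # Pad the text, because real translations are often longer than the source.
    padding = "~" * max(1, round(len(message) * expansion))
    # Brackets make truncated strings easy to spot in the user interface.
    return "[" + message.translate(ACCENTED) + padding + "]"


label = pseudo_localize("Save file")
```

If the closing bracket of `label` is cut off on screen, the layout cannot cope with longer text; if a plain English string appears next to it, that string was never sent through the translation step.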

Actual translation to human languages must be tested,

too. Possible localization failures include:

· Software is often localized by translating a list of strings out of context, and the translator may choose the wrong translation for an ambiguous source string.

· Technical terminology may become inconsistent if the project is translated by several people without proper coordination or if the translator is imprudent.

· Literal word-for-word translations may sound inappropriate, artificial or too technical in the target language.

· Untranslated messages in the original language may be left hard coded in the source code.

· Some messages may be created automatically at run time and the resulting string may be ungrammatical, functionally incorrect, misleading or confusing.

· Software may use a keyboard shortcut which has no function on the source language's keyboard layout, but is used for typing characters in the layout of the target language.

· Software may lack support for the character encoding of the target language.

· Fonts and font sizes which are appropriate in the source language may be inappropriate in the target language; for example, CJK characters may become unreadable if the font is too small.

· A string in the target language may be longer than the software can handle. This may make the string partly invisible to the user or cause the software to crash or malfunction.

· Software may lack proper support for reading or writing bi-directional text.

· Software may display images with text that was not localized.

· Localized operating systems may have differently named system configuration files and environment variables and different formats for date and currency.

Development testing:

Development testing is a software development process that involves the synchronized application of a broad spectrum of defect prevention and detection strategies in order to reduce software development risks, time, and costs. It is performed by the software developer or engineer during the construction phase of the software development lifecycle. Rather than replacing traditional QA activities, it augments them. Development testing aims to eliminate construction errors before code is promoted to QA; this strategy is intended to increase the quality of the resulting software as well as the efficiency of the overall development and QA process.

Depending on the organization's expectations for software development, development testing might include static code analysis, data flow analysis, metrics analysis, peer code reviews, unit testing, code coverage analysis, traceability, and other software verification practices.

A/B testing:

A/B testing is basically a comparison of two outputs, generally when only one variable has changed: run a test, change one thing, run the test again, compare the results. It is most useful in small-scale situations, but it also helps in fine-tuning any program. For more complex projects, multivariate testing can be done.
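The "run, change one thing, run again, compare" loop can be sketched in a few lines. In this example the function under test, `run_test`, is hypothetical: it applies a 15% discount to a price in cents, and the single variable being changed is the rounding rule.

```python
def run_test(price_cents: int, rounding: str) -> int:
    """Hypothetical function under test: 15% discount with a chosen rounding rule."""
    discounted = price_cents * 85
    if rounding == "floor":
        return discounted // 100
    # Any other value is treated as "round half up".
    return (discounted + 50) // 100


def ab_compare(inputs, variant_a: str, variant_b: str) -> dict:
    """Run the same inputs under both variants and keep only the differing results."""
    differences = {}
    for value in inputs:
        result_a = run_test(value, variant_a)
        result_b = run_test(value, variant_b)
        if result_a != result_b:
            differences[value] = (result_a, result_b)
    return differences
```

Because only the rounding rule differs between the two runs, any entry returned by `ab_compare` can be attributed to that one change.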

Concurrent testing:

In concurrent testing, the focus is on how the software performs when running continuously with normal input and under normal operation, as opposed to stress testing or fuzz testing. Memory leaks, as well as more basic faults, are more easily found and resolved using this method.

Conformance testing or type testing:

In software testing, conformance testing verifies that a product performs according to its specified standards. Compilers, for instance, are extensively tested to determine whether they meet the recognized standard for that language.

**********************************************************

7. Testing Prototypes:

Prototype testing is conducted with the intent of finding defects before the website goes live. Online prototype testing makes it possible to collect quantitative, qualitative, and behavioral data while evaluating the user experience.

· Test cases.

· Test suites.

· Test script.

· Test plan.

8. Testing V&V:

Verification: Have we built the software right? (i.e., does it implement the requirements?)

Validation: Have we built the right software? (i.e., do the deliverables satisfy the customer?)

Before discussing review, walkthrough and inspection, it helps to understand static and dynamic testing and how these three techniques fit into those two categories.

So let’s have a look at static and dynamic testing.

- Static Testing v/s Dynamic Testing

Static testing examines software work products such as requirement specifications, test plans and user manuals. These are not executed, but are checked with a set of tools and processes. It provides a powerful way to improve the quality and productivity of software development.

Dynamic testing executes the software code as a technique to detect defects and to determine quality attributes of the code. With dynamic testing methods, software is executed using a set of inputs and its output is then compared to the expected results.
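To make the contrast concrete, here is a small sketch that applies both approaches to the same piece of code. The sample `SOURCE`, the docstring rule used as the "standard", and both helper names are assumptions chosen for illustration only.

```python
import ast

# Sample code under test. It contains a deliberate bug (subtracts instead of adds).
SOURCE = '''
def add(a, b):
    return a - b
'''


def static_check(source: str) -> list:
    """Static testing: inspect the code without running it.

    Reports functions that break a (hypothetical) coding standard
    requiring every function to have a docstring.
    """
    tree = ast.parse(source)
    return [
        node.name
        for node in ast.walk(tree)
        if isinstance(node, ast.FunctionDef) and ast.get_docstring(node) is None
    ]


def dynamic_check(source: str, cases) -> list:
    """Dynamic testing: execute the code and compare actual with expected output."""
    namespace = {}
    exec(source, namespace)
    failures = []
    for args, expected in cases:
        actual = namespace["add"](*args)
        if actual != expected:
            failures.append((args, expected, actual))
    return failures
```

The static check flags the missing docstring without ever calling `add`, while the dynamic check only finds the arithmetic bug because it runs the function with inputs whose expected results are known.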

- Static Review and its advantages

Static review provides a powerful way to improve the quality and productivity of software development by helping teams recognize and fix their own defects early in the software development process. Nowadays, many software organizations conduct reviews in all major aspects of their work, including requirements, design, implementation, testing, and maintenance.

Advantages of Static Reviews:-

1. Types of defects that can be found during static testing include deviations from standards, missing requirements, design defects, non-maintainable code and inconsistent interface specifications.

2. Since static testing can start early in the life cycle, early feedback on quality issues can be established, e.g. an early validation of user requirements rather than only late in the life cycle during acceptance testing.

3. By detecting defects at an early stage, rework costs are kept relatively low, and thus a relatively cheap improvement in the quality of software products can be achieved.

4. The feedback and suggestions documented during the static testing process allow for process improvement, which helps avoid similar errors in the future.

- Roles and Responsibilities in a Review:

There are various roles and responsibilities defined for a review process. Within a review team, five types of participants can be distinguished: moderator, author, scribe, reviewer and manager. Let’s discuss their roles one by one:-

1. The moderator: The moderator (or review leader) leads the review process. This role is to determine the type of review, the approach and the composition of the review team. The moderator also schedules the meeting, disseminates documents before the meeting, coaches other team members, paces the meeting, leads possible discussions and stores the data that is collected.

2. The author: As the writer of the ‘document under review’, the author’s basic goal should be to learn as much as possible with regard to improving the quality of the document. The author’s task is to illuminate unclear areas and to understand the defects found.

3. The scribe/recorder: The scribe (or recorder) has to record each defect found and any suggestions or feedback given in the meeting for process improvement.

4. The reviewer: The role of the reviewers is to check for defects and possible improvements in accordance with the business specifications, standards and domain knowledge.

5. The manager: The manager is involved in the reviews as he or she decides on the execution of reviews, allocates time in project schedules and determines whether the review process objectives have been met.

- Phases of a formal Review:

A formal review proceeds step by step through 6 main phases. Let’s discuss these phases one by one.

1. Planning

The review process for a particular review begins with a ‘request for review’ by the author to the moderator (or inspection leader). A moderator is often assigned to take care of the scheduling (dates, time, place and invitation) of the review. The project planning needs to allow time for review and rework activities, thus providing engineers with time to participate thoroughly in reviews. An entry check is performed on the documents to decide which of them will be considered for review. The document size, the pages to be checked, the composition of the review team, the roles of each participant and the strategic approach are decided in the planning phase.

2. Kick-Off

The goal of this meeting is to get everybody on the same page regarding the document under review. The result of the entry check and the exit criteria are also discussed. During the kick-off meeting, the reviewers receive a short introduction to the objectives of the review and the documents. Role assignments, checking rate, the pages to be checked, process changes and possible other questions are also discussed during this meeting. The document under review, source documents and other related documentation can also be distributed during the kick-off.

3. Preparation

In this phase, participants work individually on the document under review using the related documents, procedures, rules and checklists provided. The individual participants identify defects, questions and comments, according to their understanding of the document and role. Spelling mistakes are recorded on the document under review but not mentioned during the meeting. The annotated document will be given to the author at the end of the logging meeting. Using checklists during this phase can make reviews more effective and efficient.

4. Review Meeting

This meeting typically consists of the following elements:-

-logging phase

-discussion phase

-decision phase.

During the logging phase the issues, e.g. defects, that have been identified during the preparation are mentioned page by page, reviewer by reviewer, and are logged either by the author or by a scribe. This phase is only for jotting down the issues, not for discussing them in detail. If an issue needs discussion, the item is logged and then handled in the discussion phase. A detailed discussion on whether or not an issue is a defect is not very meaningful at this point, as it is much more efficient to simply log it and proceed to the next one.

The issues classified as discussion items are handled during the discussion phase. Participants can take part in the discussion by bringing forward their comments and reasoning. The moderator also paces this part of the meeting and ensures that all discussed items either have an outcome by the end of the meeting, or are defined as an action point if a discussion cannot be resolved during the meeting. The outcome of discussions is documented for future reference.

At the end of the meeting, a decision on the document under review has to be made by the participants, sometimes based on formal exit criteria. The most important exit criterion is the average number of critical and major defects found per page. If the number of defects found per page exceeds a certain level, the document must be reviewed again after it has been reworked. If the document complies with the exit criteria, the document will be checked during follow-up by the moderator or one or more participants. Subsequently, the document can leave or exit the review process.

5. Rework

Based on the defects detected and improvements suggested in the review meeting, the author improves the document under review. In this phase the author does all the rework needed to ensure that the detected defects are fixed and the corrections are properly applied. Changes made to the document should be easy to identify during follow-up, so the author has to indicate where changes were made.

6. Follow-Up

After the rework, the moderator should ensure that satisfactory actions have been taken on all logged defects, improvement suggestions and change requests. If it is decided that all participants will check the updated document, the moderator takes care of the distribution and collects the feedback. In order to control and optimize the review process, a number of measurements are collected by the moderator at each step of the process. Examples of such measurements include number of defects found, number of defects found per page, time spent checking per page, total review effort, etc. It is the responsibility of the moderator to ensure that the information is correct and stored for future analysis.
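The review measurements and the exit criterion described for the review meeting are simple arithmetic, and a moderator could record them along the following lines. This is only a sketch: the `ReviewLog` class, its field names and the threshold of one critical or major defect per page are assumptions, since each organization defines its own exit criteria.

```python
from dataclasses import dataclass


@dataclass
class ReviewLog:
    """Hypothetical record of the measurements collected for one review."""
    pages: int
    critical: int
    major: int
    minor: int
    checking_minutes: float

    def __post_init__(self):
        if self.pages <= 0:
            raise ValueError("a review must cover at least one page")

    def major_defects_per_page(self) -> float:
        """Average number of critical and major defects found per page."""
        return (self.critical + self.major) / self.pages

    def minutes_per_page(self) -> float:
        """Time spent checking per page."""
        return self.checking_minutes / self.pages


def exit_decision(log: ReviewLog, max_per_page: float = 1.0) -> str:
    """Apply the exit criterion; max_per_page is an assumed threshold."""
    if log.major_defects_per_page() > max_per_page:
        return "rework and review again"
    return "rework, then follow-up check"
```

For example, a 10-page document with 2 critical and 4 major defects averages 0.6 per page, which stays under the assumed threshold, so it goes to rework and a follow-up check rather than a second full review.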

1. Walkthrough

A walkthrough is conducted by the author of the ‘document under review’, who takes the participants through the document and his or her thought processes in order to achieve a common understanding and to gather feedback. This is especially useful if people from outside the software discipline are present who are not used to, or cannot easily understand, software development documents. The content of the document is explained step by step by the author, to reach consensus on changes or to gather information. The participants are selected from different departments and backgrounds. If the audience represents a broad section of skills and disciplines, it can give assurance that no major defects are ‘missed’ in the walkthrough. A walkthrough is especially useful for higher-level documents, such as requirement specifications and architectural documents.

The specific goals of a walkthrough are:-

• To present the document to stakeholders both within and outside the software discipline, in order to gather information regarding the topic under documentation.

• To explain and evaluate the contents of the document.

• To establish a common understanding of the document.

• To examine and discuss the validity of proposed solutions and the possible alternatives.

2. Technical review

A technical review is a discussion meeting that focuses on the technical content of a document. It is led by a trained moderator, but can also be led by a technical expert. Compared to inspections, technical reviews are less formal and there is little or no focus on defect identification on the basis of referenced documents. The experts needed for a technical review can be architects, chief designers and key users. It is often performed as a peer review without management participation.

The specific goals of a technical review are:

• To evaluate the value of technical concepts and alternatives in the product and project environment.

• To establish consistency in the use and representation of technical concepts.

• To ensure, at an early stage, that technical concepts are used correctly.

• To inform participants of the technical content of the document.

3. Inspection

Inspection is the most formal review type. It is usually led by a trained moderator (certainly not by the author). The document under inspection is prepared and checked thoroughly by the reviewers before the meeting, comparing the work product with its sources and other referenced documents, and using rules and checklists. In the inspection meeting the defects found are logged. Depending on the organization and the objectives of a project, inspections can be balanced to serve a number of goals.

The specific goals of an inspection are:

• To help the author improve the quality of the document under inspection.

• To remove defects efficiently, as early as possible.

• To improve product quality, by producing documents with a higher level of quality.

• To create a common understanding by exchanging information among the inspection participants.

9. Advantages of software testing:

1. Fast

Manual testing consumes a great deal of time, both during software development and during testing of the finished application. Automated tools are a faster option, as long as the scripts to be run are standard and not complex.

2. Reliability

Automation of test script execution eliminates the possibility of human error when the same sequence of actions is repeated again and again. This can be really important, as you would be astonished to learn just how many of the defects raised are in fact caused by tester error. This particularly happens when the same boring test scripts have to be run over and over again and, at the opposite end of the spectrum, when really complex testing has to be done.

3. Comprehensive

An automated test tool can hold a suite of tests that exercises each and every feature in the application. This means that key parts of testing are unlikely to be missed. You might think such omissions are unlikely in reality, but I have managed a project where a key part of functionality was in fact overlooked by the test team.

4. Re-usability

The test cases can be used in various versions of the software. Not only will your project management stakeholders be very grateful for the reduced project time and cost, but it will certainly help you when estimating.

5. Programmable

One can program the test automation software to pull out elements of the software developed which otherwise may not have been uncovered. Hence this should make your testing even more thorough, something you may not be so keen on when defect after defect is raised as a result!
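The reliability and re-usability points above can be illustrated with a small automated suite built on Python's standard `unittest` module. The function under test, `is_valid_username`, and the rows in `CASES` are invented for this sketch; the idea is only that the same table of cases can be re-run unchanged against every new version of the software.

```python
import unittest


def is_valid_username(name: str) -> bool:
    """Hypothetical function under test: 3 to 12 alphanumeric characters."""
    return name.isalnum() and 3 <= len(name) <= 12


# Reusable test cases: (input, expected result).
CASES = [
    ("bob", True),
    ("al", False),          # too short
    ("has space", False),   # not alphanumeric
    ("a" * 13, False),      # too long
]


class UsernameTests(unittest.TestCase):
    def test_table(self):
        # subTest reports each failing row separately instead of stopping at the first.
        for name, expected in CASES:
            with self.subTest(name=name):
                self.assertEqual(is_valid_username(name), expected)


def run_suite() -> unittest.TestResult:
    """Run the suite programmatically, exactly the same way on every execution."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(UsernameTests)
    result = unittest.TestResult()
    suite.run(result)
    return result
```

Calling `run_suite()` repeats the identical sequence of checks each time, which is the property that removes tester error from repetitive runs.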

*Source for writing this blog:

1. Google

2. Wikipedia

3. Tutorial point

4. David Varnon Software engineering.

5. Melsatar Software methodologies.

10. Contact:

LinkedIn: http://in.linkedin.com/pub/sourav-poddar/6b/542/a30

or mail me at

*I need your suggestions and feedback so that I can improve my approach and ideas for upcoming blogs, and I hope that you appreciate it :)

Thank YOU!!!

MR. SOURAV PODDAR,
